Tech companies across the globe continue to grapple with a landscape of overlapping and complex regulatory regimes as a result of the intensifying growth of new and emerging technologies. Most recently, Australia has taken another step in the race to regulate, with the publication of a report on Human Rights and Technology (the Report) by the Australian Human Rights Commission.

Beyond its 38 ambitious recommendations, the Report also reflects a broader trend in Australia towards:

- articulating how existing legal frameworks can be applied more effectively to new technology – in this case, how Australia’s current human rights protections have a role to play in regulating the development and use of AI; and

- increased regulation of automated and algorithmic technologies through the introduction of new legal obligations aiming to make those technologies more transparent and comprehensible.

The Report follows the Federal Government’s introduction of the News Media Bargaining Code earlier this year, which imposed new requirements on designated digital platform services to disclose certain data to news businesses and to notify them of significant changes to algorithms. It also sits within a broader Commonwealth regulatory focus on digital platforms and algorithm transparency.

REGULATING AI DECISIONS

The Report is specifically concerned with decisions and decision-making processes that are materially assisted by AI, and which have a legal, or similarly significant, effect for individuals. It seeks to address the problem of “black box” or “opaque” AI, which can obscure the reasons for a decision and frustrate the ability to examine its merits or lawfulness, by proposing a regime that will increase transparency and “explainability” of AI decisions.

The Commission warns against “algorithmic bias”, where an automated system may produce unfair or unlawful decisions which discriminate against individuals on the basis of a protected attribute such as race, age or gender. Although the risk of discrimination in decision-making is not new, it can be harder to detect when decisions are made by black box AI tools.

The Report does not recommend any prohibition on AI-informed decisions but focuses instead on their transparency and explainability. This differs from the approach taken in the EU’s General Data Protection Regulation, which currently protects individuals (with some exceptions) from being subjected to a decision “based solely on automated processing” where that decision produces a legal or similarly significant effect. Although it does not propose specific laws to address them, the Report’s discussion of “high risk” AI systems raises issues similar to those contemplated in the European Commission’s recent regulatory proposal. That proposal would introduce obligations for pre-launch product testing, risk management and human oversight of high risk AI, with maximum penalties of EUR 30 million or 6% of total worldwide annual turnover for non-compliance.

IMPLICATIONS FOR THE PRIVATE SECTOR

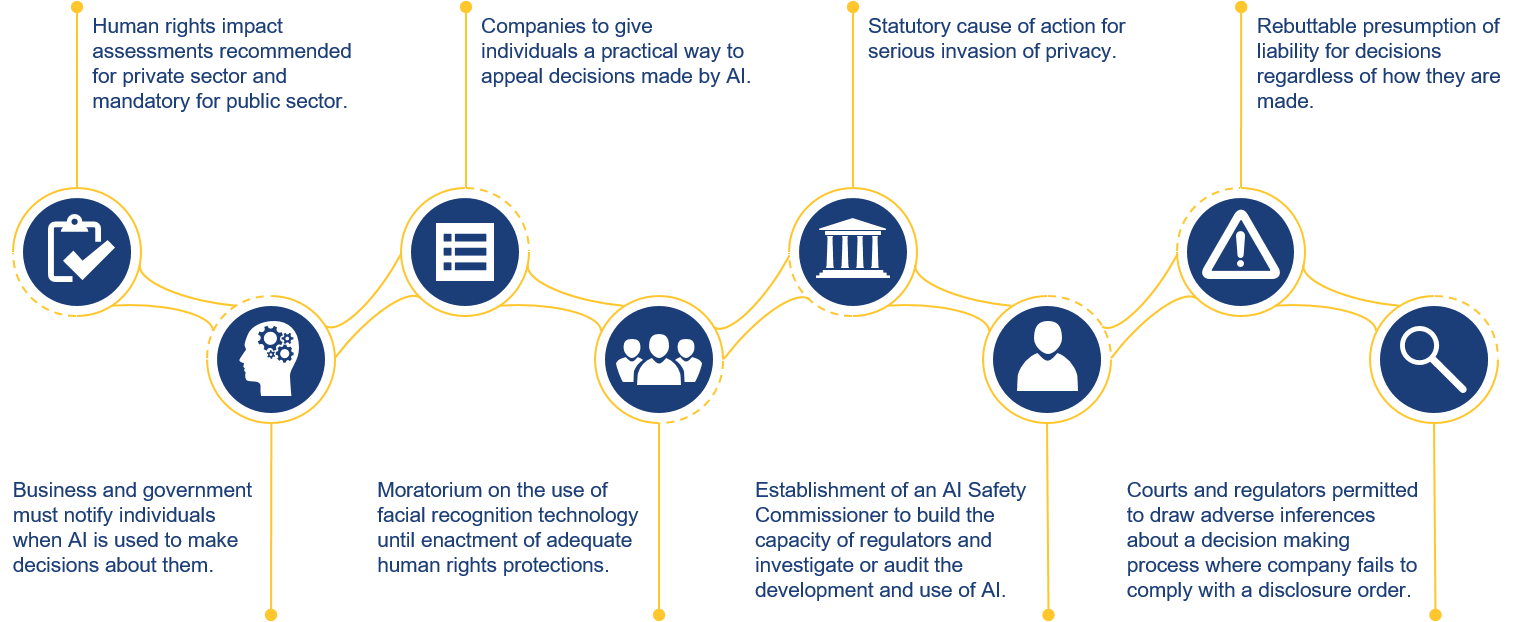

The key recommendations in the Report which have implications for the private sector include:

A more detailed overview of the recommendations is available in our separate blog post on the Report.

PRACTICAL GUIDANCE FOR TECH COMPANIES

AI design and development

- Consider suitability of AI decision tools in high risk areas. In light of the proposed rebuttable presumption that companies are liable for their decisions regardless of how they are made (including automated or AI decisions), companies should evaluate and seek advice on the risks of using AI decision-making in high risk areas, or in ways that may result in unlawful discrimination. One example is the use of AI-assisted employment tools to assess the suitability of job candidates, where decisions could be based on protected attributes such as age, disability or race.

- Consider human rights issues at key stages. In line with the Report’s recommendation to implement human rights impact assessments, companies should consider establishing frameworks for evaluating the human rights implications of new AI tools, to ensure potential human rights risks are considered early in the product design stage. Post-implementation testing and monitoring of AI should also occur, to identify trends and potential issues which may be emerging in AI use despite best intentions at the design stage.

- Expect greater transparency and explainability requirements. AI designers should also anticipate the introduction of requirements for companies to disclose explanations for AI decisions, for example, in court or regulatory proceedings.

- Be prepared for regulation of emerging technologies. Companies should anticipate that future regulations will target the outcomes produced by technology rather than particular tools, and so will also extend to new and emerging technologies, including those in testing and development.
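
By way of illustration only, the post-implementation monitoring described above could begin with a simple comparison of outcomes across groups. The Python sketch below is not drawn from the Report: the function names, the group labels and the `min_ratio` threshold are all hypothetical, and the threshold is an illustrative trigger for further review, not a legal test for discrimination.

```python
from collections import defaultdict


def approval_rates(decisions):
    """Return the share of favourable outcomes for each group.

    `decisions` is an iterable of (group, approved) pairs. `group` is a
    hypothetical label for a protected attribute, such as an age band.
    """
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        if ok:
            approved[group] += 1
    return {g: approved[g] / totals[g] for g in totals}


def flag_disparities(rates, min_ratio=0.8):
    """List groups whose rate is below `min_ratio` of the highest rate.

    `min_ratio` is an assumed, illustrative threshold, not a legal standard.
    """
    best = max(rates.values())
    if best == 0:
        return []
    return sorted(g for g, r in rates.items() if r / best < min_ratio)


# Made-up sample data for demonstration purposes only.
sample = [
    ("under_40", True), ("under_40", True), ("under_40", True), ("under_40", False),
    ("over_40", True), ("over_40", False), ("over_40", False), ("over_40", False),
]
rates = approval_rates(sample)
flagged = flag_disparities(rates)
```

A flagged group would not of itself establish unlawful discrimination. It would simply indicate a trend that may warrant closer human and legal review.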

Increased disclosure requirements and regulatory scrutiny

- Anticipate increased regulatory scrutiny. Companies should be preparing for increased regulatory scrutiny of AI in the form of monitoring and investigations, by both existing regulators and the proposed new AI Safety Commissioner.

- Invest in systems and processes for new procedural rights and disclosure requirements. Companies should also plan for and invest in systems to support the customer-facing processes and disclosure obligations that are likely to apply to AI decisions. Specifically, these include notifying individuals when AI is materially used to make a decision that has a legal, or similarly significant, effect on them, and providing a process for effective human review of AI decisions (by someone with the appropriate authority, skills and information) to correct any errors that have arisen through using AI.
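
As a rough sketch of what such systems might record, the Python example below models a single decision, whether the individual should be notified, and the result of any human review. It is an assumption-laden illustration and not a design proposed in the Report: the `DecisionRecord` class and every field and method name are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class DecisionRecord:
    """Hypothetical record of one decision affecting an individual."""

    decision_id: str
    outcome: str
    ai_materially_used: bool   # was AI materially used in making the decision?
    significant_effect: bool   # legal, or similarly significant, effect?
    explanation: str           # reasons that could be disclosed on request
    reviewed_by: Optional[str] = None
    reviewed_outcome: Optional[str] = None

    def requires_notification(self) -> bool:
        """Notify only where AI was materially used and the effect is significant."""
        return self.ai_materially_used and self.significant_effect

    def apply_human_review(self, reviewer: str, outcome: str) -> None:
        """Record the outcome reached by a suitably authorised human reviewer."""
        self.reviewed_by = reviewer
        self.reviewed_outcome = outcome

    @property
    def final_outcome(self) -> str:
        """The reviewed outcome, if any, takes precedence over the original."""
        return self.reviewed_outcome or self.outcome


# Made-up example values for demonstration purposes only.
record = DecisionRecord(
    decision_id="D-001",
    outcome="declined",
    ai_materially_used=True,
    significant_effect=True,
    explanation="Score fell below the cut-off used by the assessment tool.",
)
notify = record.requires_notification()
record.apply_human_review(reviewer="senior_assessor", outcome="approved")
```

Keeping the original outcome alongside the reviewed outcome preserves an audit trail, which may assist if a company is later asked to explain how a decision was reached.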

© Herbert Smith Freehills 2024